Why Most Playwright Automation Fails at Scale — And How to Think About Automation Cost Correctly

Playwright is free, but automation is not. Learn the true cost of test automation — creation, maintenance, infrastructure, and trust erosion — and how to evaluate tools correctly.

Automation sounds easy. Playwright is free. AI writes tests.

Right?

That's the promise you see in demos, slides, and tooling comparisons.

But the reality for most teams is very different:

- Writing tests is a meaningful effort (especially early on).

- Keeping them stable and reliable over time is where automation breaks down.

- Most teams underestimate the hidden operational and ongoing costs.

This piece is about understanding automation cost correctly — beyond creation alone — and reframing how teams should evaluate automation tools.

Creation Is a Bottleneck — But It's Not the Whole Story

It's important to be clear: creation is a real bottleneck, especially early in a team's automation journey.

Writing reliable tests involves:

- understanding application state flows

- choosing stable locators

- structuring retries and assertions

- handling dynamic content

- designing tests that don't become brittle
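To make "choosing stable locators" concrete, here is a minimal sketch of a selector review helper. The risk categories and matching rules are illustrative assumptions, not part of Playwright or any existing lint tool; the idea is simply that positional and absolute selectors tend to break on UI changes, while dedicated test attributes and ARIA roles tend to survive them.

```typescript
// Illustrative heuristic only: the categories and rules below are assumptions,
// not an API of Playwright or any linting tool.
type SelectorRisk = "low" | "medium" | "high";

function selectorRisk(selector: string): SelectorRisk {
  const s = selector.trim();
  // Absolute XPath and positional CSS depend on page layout,
  // so they tend to break when the UI is rearranged.
  if (/^(\/|xpath=)/.test(s) || /:nth-(child|of-type)\(/.test(s)) {
    return "high";
  }
  // Dedicated test attributes and ARIA roles usually survive restyling.
  if (/\[data-testid=/.test(s) || /\[role=/.test(s)) {
    return "low";
  }
  // Everything else (plain tags, classes, ids) sits in between.
  return "medium";
}

// Returns only the selectors worth reviewing first.
function riskySelectors(selectors: string[]): string[] {
  return selectors.filter((sel) => selectorRisk(sel) === "high");
}
```

A check like this could run in code review to flag brittle selectors before they reach the suite. It is a coarse filter, so treat its output as a prompt for discussion rather than a verdict.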

Even with frameworks like Playwright, a 20-step flow can take hours to build manually. In early automation efforts, that's significant friction.

AI-assisted automation can dramatically reduce this creation effort — sometimes turning hours into minutes — but it doesn't fully eliminate the work. Instead, it shifts where the effort is concentrated.

The key point is this:

Creation and maintenance both matter; they just matter at different stages. AI has a bigger impact on reducing creation friction than on eliminating long-term maintenance challenges.

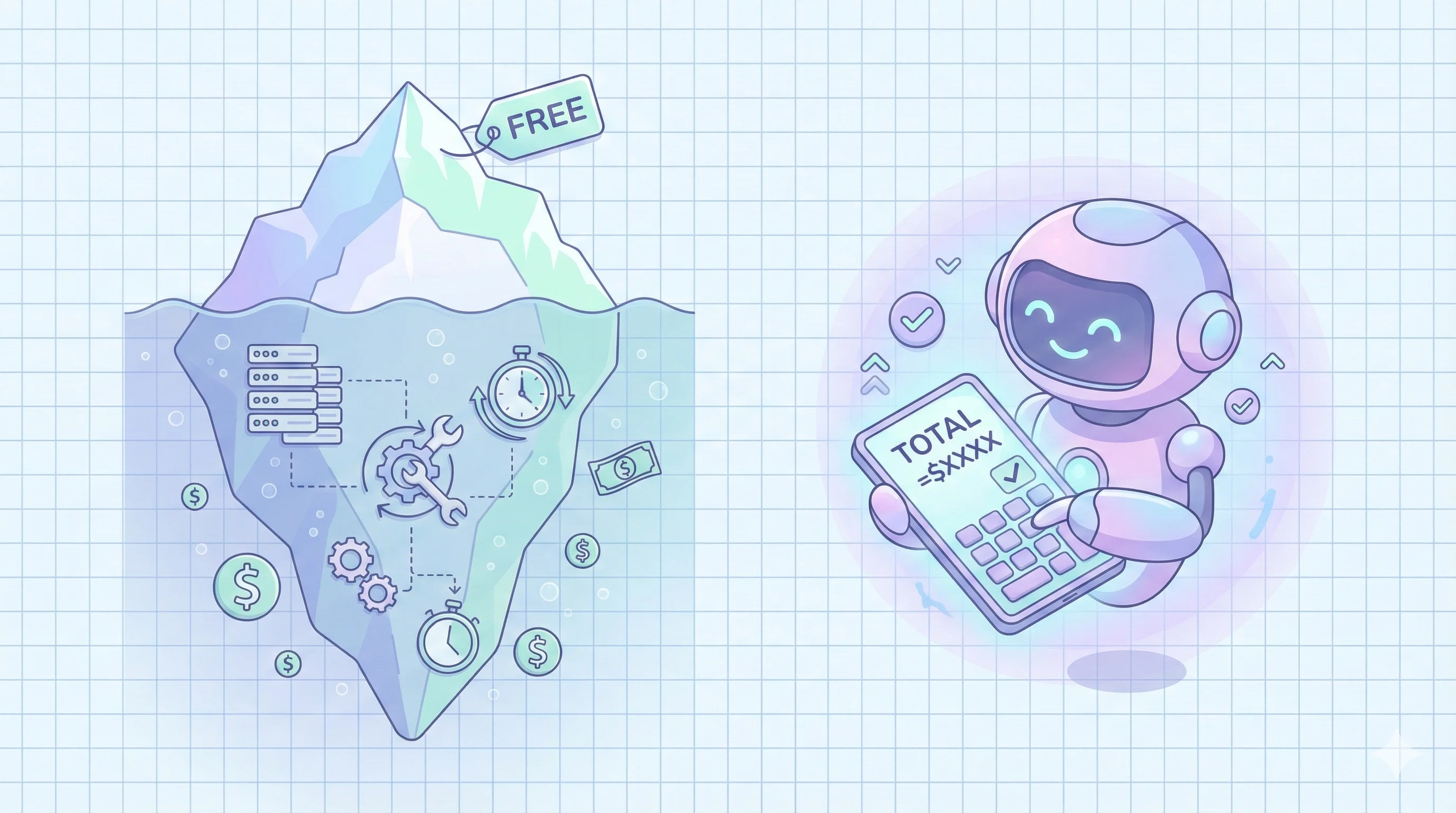

The Free Tool Fallacy

A lot of teams begin with:

"Playwright is open source and free — how expensive can automation be?"

That's technically true for the framework itself.

But automation is more than the framework. You also have to build and maintain:

- locator strategies

- retry logic

- browser infrastructure

- CI/CD integration

- failure analysis tooling

- reporting and dashboards

- maintenance workflows

These pieces are neither trivial nor free.

Even with open-source tooling, the effort required to make automation reliable, maintainable, and trustworthy adds up quickly.

Maintenance: Where Automation Often Breaks Down

Almost every team that adopts automation experiences the same shift over time:

Creation feels exciting. Maintenance feels exhausting.

Here's the typical pattern:

- UI changes break selectors

- Flaky tests erode confidence

- Engineers end up debugging tests more than shipping features

- Test suites slow down release cycles

- Teams start ignoring failures

- Regression coverage stops delivering meaningful insight

This isn't creation failure — it's maintenance failure.

And the silent cost here is huge:

- Engineers are taken away from feature work.

- Release confidence erodes.

- The suite becomes a burden, not a support.

This is the point where automation stops being an asset and starts feeling like a cost center.

Infrastructure Cost: Execution Isn't Free

Another blind spot in evaluation discussions is infrastructure.

Many teams forget that running tests has real operational implications:

- parallel execution needs compute resources

- CI runners incur costs

- storage is needed for artifacts, logs, and videos

- reporting layers require compute and design

- scheduling, queuing, retries, and fleet management take effort

In other words:

Execution isn't free — even if the framework is.

To evaluate automation cost properly, teams must account for both:

- creation effort

- ongoing execution and infrastructure cost
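A back-of-the-envelope estimate makes the execution side visible. The sketch below is a simplified model with hypothetical field names and placeholder figures; it assumes billing per runner-minute and that artifacts are kept for roughly a month. Note that parallel execution shortens wall-clock time but does not reduce the total runner-minutes billed.

```typescript
// All field names and figures are hypothetical placeholders, not benchmarks.
interface ExecutionProfile {
  testsPerRun: number;           // tests executed in one suite run
  avgTestMinutes: number;        // average runner-minutes per test
  runsPerMonth: number;          // suite runs per month (PRs, merges, schedules)
  runnerCostPerMinute: number;   // price per runner-minute
  artifactGbPerRun: number;      // traces, videos, and logs stored per run
  storageCostPerGbMonth: number; // storage price per GB per month
}

function monthlyExecutionCost(p: ExecutionProfile): number {
  // Parallelism spreads these minutes across runners but does not shrink them.
  const runnerMinutes = p.testsPerRun * p.avgTestMinutes * p.runsPerMonth;
  const compute = runnerMinutes * p.runnerCostPerMinute;
  // Assumes each run's artifacts are retained for about one month.
  const storage = p.artifactGbPerRun * p.runsPerMonth * p.storageCostPerGbMonth;
  return compute + storage;
}

// Example with made-up inputs: 300 tests, 30 seconds each, 400 runs a month.
const exampleMonthlyCost = monthlyExecutionCost({
  testsPerRun: 300,
  avgTestMinutes: 0.5,
  runsPerMonth: 400,
  runnerCostPerMinute: 0.008,
  artifactGbPerRun: 0.5,
  storageCostPerGbMonth: 0.02,
}); // about 484 in whatever currency the prices use
```

The model leaves out the engineering time for scheduling, queuing, and fleet management, which is often the larger share.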

Trust Erosion: The Hidden Cost Most Teams Ignore

There's another silent cost that rarely shows up in spreadsheets:

Trust erosion.

The sequence usually plays out like this:

- Tests start failing intermittently

- Developers begin to ignore failure alerts

- CI signals become noise

- Teams ship releases with less validation than intended

At that point, the automation suite stops being a safety net and becomes background static.

A test suite that teams don't trust is worse than no automation at all — because it gives a false sense of quality.
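Trust erosion is easier to manage once it is measured. One common working definition, used here as an assumption, is that a test is flaky if it both passed and failed against the same commit. The record shape and function name below are hypothetical; in practice the history would come from your CI system's run data.

```typescript
// Hypothetical record shape; real data would come from CI run history.
interface RunRecord {
  testId: string;
  commitSha: string;
  passed: boolean;
}

// Assumed definition: a test is flaky if, for at least one commit,
// it has both a passing and a failing run.
function flakyTests(history: RunRecord[]): string[] {
  // outcomes[testId][commitSha] tracks whether a pass and a fail were seen.
  const outcomes: Record<string, Record<string, { pass: boolean; fail: boolean }>> =
    Object.create(null);
  for (const run of history) {
    const byCommit = outcomes[run.testId] ?? (outcomes[run.testId] = Object.create(null));
    const seen =
      byCommit[run.commitSha] ?? (byCommit[run.commitSha] = { pass: false, fail: false });
    if (run.passed) {
      seen.pass = true;
    } else {
      seen.fail = true;
    }
  }
  return Object.keys(outcomes)
    .filter((testId) =>
      Object.keys(outcomes[testId]).some((sha) => {
        const seen = outcomes[testId][sha];
        return seen.pass && seen.fail;
      })
    )
    .sort();
}
```

A test that fails on one commit and passes on a later one is not counted, since that pattern usually reflects a real fix rather than flakiness. Tracking the size of this list over time gives a simple signal for when CI results are turning into noise.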

Framework vs Managed Automation: A Strategic Distinction

It's useful to separate two fundamentally different approaches:

| Dimension | Playwright (Framework) | Managed Automation |
|---|---|---|
| Responsibility | Fully your team's | Shared with provider |
| Maintenance | Entirely yours | Reduced or assisted |
| Infrastructure | You provision & operate | Included |
| Reporting | You build & interpret | Built-in |
| Healing | Manual | AI-assisted |
| CI Integration | You set up | Preconfigured |
| Scaling | DevOps burden | Native support |

Frameworks give control. Managed platforms provide operational support and reduced friction.

They shouldn't be evaluated on the same axis.

Base your choice not on "which is cheaper today?" but on:

- What does your team want to own?

- Where does your engineering investment deliver the most value?

The Three Real Cost Buckets of Automation

When evaluating automation value, focus on these three categories, alongside the infrastructure cost covered earlier:

1. Creation Cost

Time and effort to build reliable tests. Even with frameworks, this can be significant initially.

2. Maintenance Cost

Ongoing updates, fixes, and keeping tests resilient to change. In practice, this is where much of a team's automation time tends to go once the initial suite is built.

3. Trust Erosion Cost

The downstream impact when tests become flaky or noisy. If your team stops trusting the suite, it stops delivering value.

Most teams think heavily about #1 and ignore #2 and #3 — until it's too late.
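The three buckets can be put into a rough model to see how they compare over time. This is a sketch under stated assumptions: the field names are hypothetical, costs are expressed as engineering hours at a flat rate, and trust erosion is approximated by the hours spent triaging flaky or noisy failures, which understates its real impact.

```typescript
// Hypothetical inputs; replace with your own team's estimates.
interface CostAssumptions {
  creationHours: number;            // one-off effort to build the suite
  maintenanceHoursPerMonth: number; // fixing selectors, updating flows
  triageHoursPerMonth: number;      // proxy for trust erosion: chasing flaky failures
  hourlyRate: number;               // loaded cost of one engineering hour
}

interface CostBreakdown {
  creation: number;
  maintenance: number;
  trustErosion: number;
  total: number;
}

function cumulativeCost(a: CostAssumptions, months: number): CostBreakdown {
  const creation = a.creationHours * a.hourlyRate;
  const maintenance = a.maintenanceHoursPerMonth * months * a.hourlyRate;
  const trustErosion = a.triageHoursPerMonth * months * a.hourlyRate;
  return { creation, maintenance, trustErosion, total: creation + maintenance + trustErosion };
}

// First month in which cumulative maintenance hours match or exceed creation hours.
function monthMaintenanceOvertakesCreation(a: CostAssumptions): number {
  if (a.maintenanceHoursPerMonth <= 0) return Infinity;
  return Math.ceil(a.creationHours / a.maintenanceHoursPerMonth);
}
```

With made-up inputs of 120 creation hours, 30 maintenance hours a month, and 10 triage hours a month, maintenance catches up with creation in month 4, and after a year creation accounts for only a fifth of the total. The exact figures matter less than the shape: the recurring buckets dominate quickly.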

How to Evaluate Automation Tools Correctly

Here's a practical framework for evaluation:

Look at Cost Over Time, Not Just Month 1

A tool priced at $500/month today may cost more as usage grows. That can still be preferable to the fixed headcount cost of internal automation roles, so compare both over a year or more rather than over the first month.

Understand Maintenance Workflow

Ask how brittle the suite is and what mechanisms exist to proactively reduce upkeep.

Evaluate Reporting & Feedback

A test suite that outputs logs is not the same as one that gives decision-ready signals before a release.

Assess Workflow Integration

Triggers, feedback loops, blocking rules, and alerting mechanisms all matter.

Don't Ignore Operational Lift

Calculate time spent week after week keeping tests green — that's where real effort accumulates.

Scaling Anxiety vs Headcount Reality

Teams often fear variable run-based pricing because it feels unpredictable.

But compare:

- Hiring two automation engineers (fixed cost regardless of usage)

Versus

- Paying a variable, usage-aligned cost

In many scenarios, paying per usage scales with actual value delivered, while headcount stays fixed whether the workload is low or high.

Variable cost aligns with usage — and that can be a strategic advantage.
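The comparison can be reduced to a break-even point. The sketch below uses hypothetical names and assumes a flat platform fee plus a per-run price on one side and a fixed monthly headcount cost on the other. It deliberately ignores that a managed platform rarely removes all in-house effort, so read the result as an upper bound on the usage side's advantage.

```typescript
// Hypothetical pricing model; all fields are assumptions for illustration.
interface PricingAssumptions {
  fixedMonthlyCost: number; // e.g. loaded cost of in-house automation roles
  platformBaseFee: number;  // flat monthly platform fee
  pricePerRun: number;      // usage-based price per suite run
}

function usageCost(p: PricingAssumptions, runsPerMonth: number): number {
  return p.platformBaseFee + p.pricePerRun * runsPerMonth;
}

// Number of monthly runs at which usage-based cost equals the fixed cost.
// Below this volume, usage pricing is the cheaper option in this model.
function breakEvenRuns(p: PricingAssumptions): number {
  if (p.pricePerRun <= 0) {
    throw new RangeError("pricePerRun must be positive");
  }
  return Math.max(0, (p.fixedMonthlyCost - p.platformBaseFee) / p.pricePerRun);
}

function cheaperOption(p: PricingAssumptions, runsPerMonth: number): "usage" | "fixed" {
  return usageCost(p, runsPerMonth) < p.fixedMonthlyCost ? "usage" : "fixed";
}
```

For example, with a made-up fixed cost of 20,000 a month, a 500 base fee, and 2 per run, the break-even sits at 9,750 runs a month. Knowing that number turns "variable pricing feels unpredictable" into a concrete threshold to monitor.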

What Matters Most: Release Confidence

At the end of the day, the real KPI for automation is not:

"Cost per month or cost per run"

The real metric is:

Does this tool increase release confidence while lowering long-term maintenance overhead?

A suite that reliably signals quality before release — and does so without constant human firefighting — is what delivers real value.

Final Thought

Automation is not about writing tests once and running them forever without upkeep.

Automation is about continuous outcome assurance:

- stability over time

- reduced maintenance drag

- meaningful insights

- business-readable release signals

Playwright is a powerful framework — but frameworks need infrastructure, workflows, monitoring, and heuristics to deliver real automation value.

Free tools don't eliminate complexity — they just change where the complexity lives.

The smarter question isn't:

"Is this cheaper?"

It's:

"Does this increase release confidence while reducing long-term cost?"

And that is the metric that actually matters.

The QAby.AI team helps engineering teams escape the test maintenance trap with AI-powered testing that adapts to your application changes automatically.